Umwelt: Accessible Structured Editing of Multi-Modal Data Representations

ACM Human Factors in Computing Systems (CHI), 2024. DOI: 10.1145/3613904.3641996

Jonathan Zong

MIT CSAIL

Isabella Pedraza Pineros

MIT CSAIL

Mengzhu (Katie) Chen

MIT CSAIL

Daniel Hajas

University College London

Arvind Satyanarayan

MIT CSAIL

Abstract

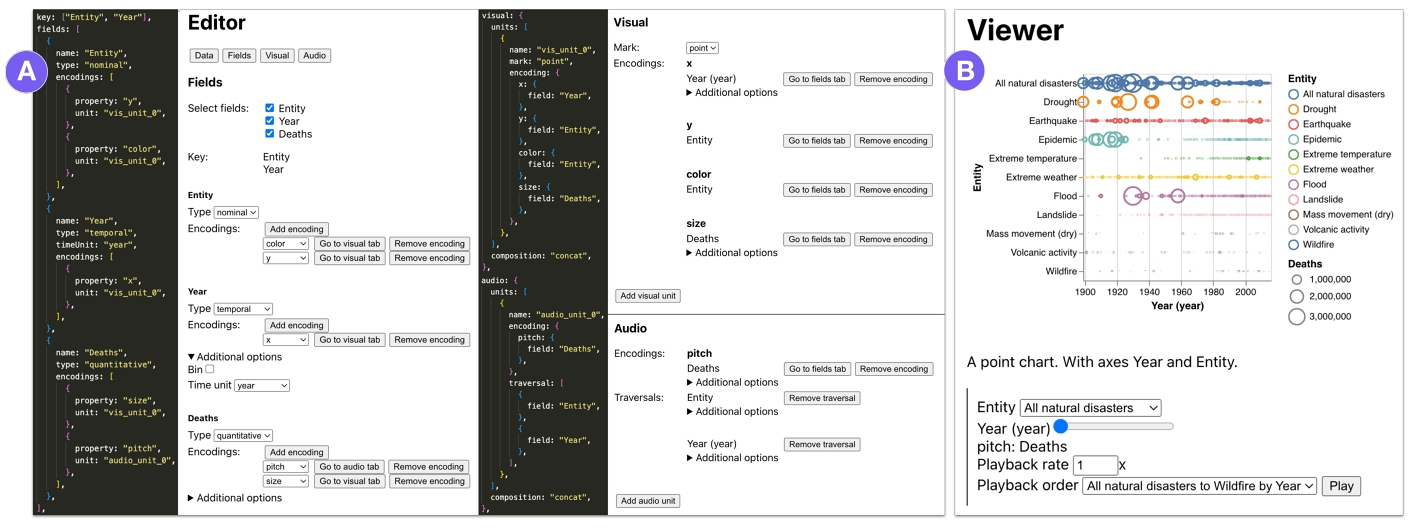

We present Umwelt, an authoring environment for interactive multimodal data representations. In contrast to prior approaches, which center the visual modality, Umwelt treats visualization, sonification, and textual description as coequal representations: they are all derived from a shared abstract data model, such that no modality is prioritized over the others. To simplify specification, Umwelt evaluates a set of heuristics to generate default multimodal representations that express a dataset’s functional relationships. To support smoothly moving between representations, Umwelt maintains a shared query predicate that is reified across all modalities — for instance, navigating the textual description also highlights the visualization and filters the sonification. In a study with 5 blind / low-vision expert users, we found that Umwelt’s multimodal representations afforded complementary overview and detailed perspectives on a dataset, allowing participants to fluidly shift between task- and representation-oriented ways of thinking.

Bibtex

@inproceedings{2024-umwelt,
  title = {{Umwelt: Accessible Structured Editing of Multi-Modal Data Representations}},
  author = {Jonathan Zong AND Isabella Pedraza Pineros AND Mengzhu (Katie) Chen AND Daniel Hajas AND Arvind Satyanarayan},
  booktitle = {ACM Human Factors in Computing Systems (CHI)},
  year = {2024},
  doi = {10.1145/3613904.3641996},
  url = {https://vis.csail.mit.edu/pubs/umwelt}
}