Abstraction Alignment: Comparing Model-Learned and Human-Encoded Conceptual Relationships

ACM Human Factors in Computing Systems (CHI), 2025. DOI: 10.1145/3706598.3713406

Angie Boggust

MIT CSAIL

Hyemin (Helen) Bang

MIT CSAIL

Hendrik Strobelt

IBM Research

Arvind Satyanarayan

MIT CSAIL

Abstract

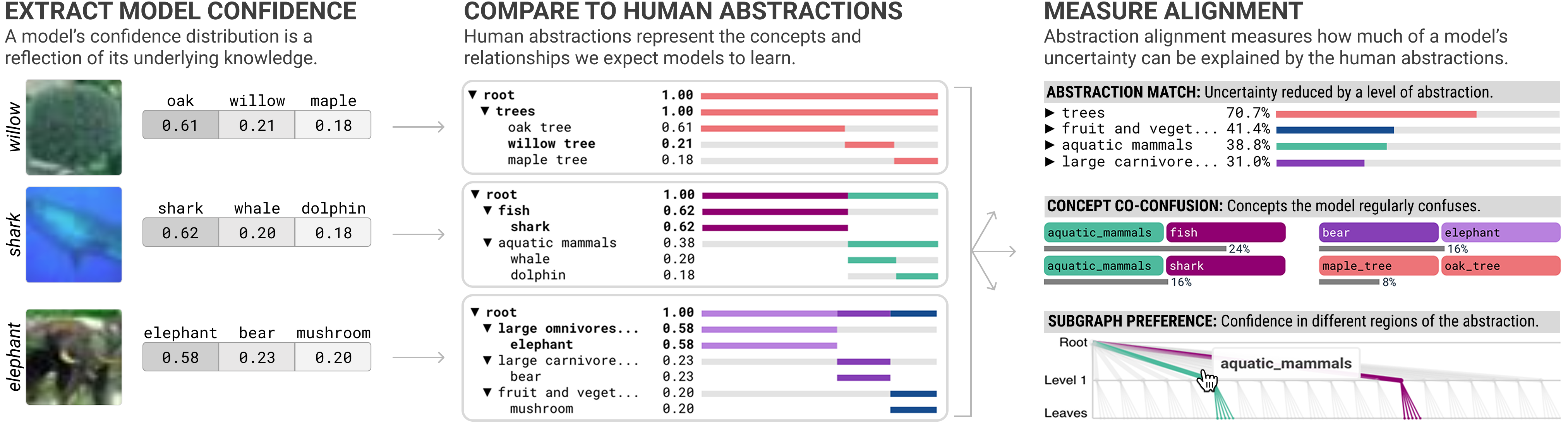

While interpretability methods identify a model’s learned concepts, they overlook the relationships between concepts that make up its abstractions and inform its ability to generalize to new data. To assess whether models have learned human-aligned abstractions, we introduce abstraction alignment, a methodology to compare model behavior against formal human knowledge. Abstraction alignment externalizes domain-specific human knowledge as an abstraction graph, a set of pertinent concepts spanning levels of abstraction. Using the abstraction graph as a ground truth, abstraction alignment measures the alignment of a model’s behavior by determining how much of its uncertainty is accounted for by the human abstractions. By aggregating abstraction alignment across entire datasets, users can test alignment hypotheses, such as which human concepts the model has learned and where misalignments recur. In evaluations with experts, abstraction alignment differentiates seemingly similar errors, improves the verbosity of existing model-quality metrics, and uncovers improvements to current human abstractions.
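As a rough illustration of the idea of checking how much of a model's uncertainty is accounted for by human abstractions, the sketch below sums a model's class probabilities up a toy two-level hierarchy and compares entropy at the leaf level with entropy at the parent level. This is not the paper's implementation: the `PARENT` mapping, the function names, and the entropy-reduction score are all assumptions chosen for this example.

```python
import math

# Hypothetical two-level abstraction graph: each leaf class has one parent concept.
PARENT = {"oak": "tree", "palm": "tree", "shark": "fish", "trout": "fish"}


def entropy(probs):
    """Shannon entropy in bits, skipping zero-probability entries."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def propagate(leaf_probs, parent=PARENT):
    """Sum each leaf's probability into its parent concept."""
    totals = {}
    for leaf, p in leaf_probs.items():
        totals[parent[leaf]] = totals.get(parent[leaf], 0.0) + p
    return totals


def entropy_reduction(leaf_probs, parent=PARENT):
    """Fraction of leaf-level entropy that disappears at the parent level.

    Illustrative score only (an assumption of this sketch): 1.0 means all
    uncertainty sits inside a single parent concept, 0.0 means none does.
    """
    h_leaf = entropy(leaf_probs.values())
    if h_leaf == 0:
        return 0.0  # a fully confident prediction has no uncertainty to explain
    h_parent = entropy(propagate(leaf_probs, parent).values())
    return (h_leaf - h_parent) / h_leaf


# Confusion between two trees: uncertainty stays within one human concept.
aligned = {"oak": 0.5, "palm": 0.5, "shark": 0.0, "trout": 0.0}
# Confusion between a tree and a fish: uncertainty crosses human concepts.
misaligned = {"oak": 0.5, "palm": 0.0, "shark": 0.5, "trout": 0.0}

print(entropy_reduction(aligned))     # 1.0
print(entropy_reduction(misaligned))  # 0.0
```

Both toy predictions have the same leaf-level uncertainty (one bit), yet only the first is explained by the hierarchy, which is the kind of distinction between seemingly similar errors that the abstract describes.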

Bibtex

@inproceedings{2025-abstraction-alignment,
  title = {{Abstraction Alignment: Comparing Model-Learned and Human-Encoded Conceptual Relationships}},
  author = {Angie Boggust AND Hyemin (Helen) Bang AND Hendrik Strobelt AND Arvind Satyanarayan},
  booktitle = {ACM Human Factors in Computing Systems (CHI)},
  year = {2025},
  doi = {10.1145/3706598.3713406},
  url = {https://vis.csail.mit.edu/pubs/abstraction-alignment}
}